The history of computer development is often described in terms of generations of computing devices. A generation refers to a stage of improvement in the product development process, and the term is also applied to successive advances in computer technology. With each new generation, the circuitry has become smaller and more advanced than in the generation before it. As a result of this miniaturization, speed, power, and computer memory have increased. New discoveries are constantly being made that affect the way we live, work and play.

Each generation of computers is characterized by a major technological development that fundamentally changed the way computers operate, resulting in increasingly smaller, cheaper, more powerful, more efficient and more reliable devices. Read about each generation and the developments that led to the devices we use today.

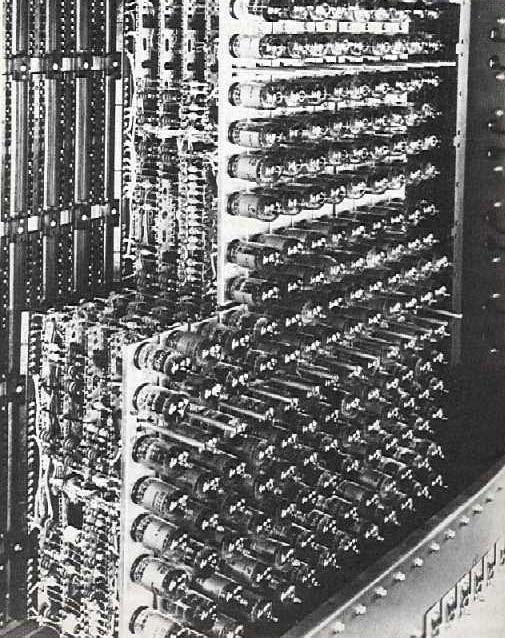

First Generation - 1940-1956: Vacuum Tubes

The first computers used vacuum tubes for circuitry and magnetic drums for memory, and were often enormous, taking up entire rooms. A magnetic drum, also referred to simply as a drum, is a metal cylinder coated with magnetic iron-oxide material on which data and programs can be stored. Magnetic drums were once used as a primary storage device but were later relegated to auxiliary storage. The tracks on a magnetic drum are assigned to channels located around the circumference of the drum, forming adjacent circular bands that wind around the drum. A single drum can have up to 200 tracks. As the drum rotates at a speed of up to 3,000 rpm, the device's read/write heads deposit magnetized spots on the drum during a write operation and sense these spots during a read operation. This action is similar to that of a magnetic tape or disk drive.
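To get a feel for what 3,000 rpm means for access time, the arithmetic can be sketched in a few lines of Python. The function name `drum_timing` is made up for this illustration, and the "half a revolution on average" rule is a common simplifying assumption rather than a figure from any particular drum.

```python
def drum_timing(rpm):
    """Return (seconds per revolution, average rotational latency in seconds)."""
    revolutions_per_second = rpm / 60
    seconds_per_revolution = 1 / revolutions_per_second
    # Assumption: on average, the wanted spot is half a revolution
    # away from the read/write head when the request arrives.
    average_latency = seconds_per_revolution / 2
    return seconds_per_revolution, average_latency


revolution, latency = drum_timing(3000)
print(f"one revolution: {revolution * 1000:.0f} ms")
print(f"average wait:   {latency * 1000:.0f} ms")
```

Under these assumptions, a drum spinning at 3,000 rpm completes a revolution in about 20 milliseconds, so a program waits roughly 10 milliseconds on average for its data to pass under the head.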

First-generation computers were very expensive to operate and, in addition to using a great deal of electricity, generated a lot of heat, which was often the cause of malfunctions. They relied on machine language to perform operations, and they could only solve one problem at a time. Machine languages are the only languages understood by computers. While easily understood by computers, machine languages are almost impossible for humans to use because they consist entirely of numbers. Programmers, therefore, use either a high-level programming language or an assembly language. An assembly language contains the same instructions as a machine language, but the instructions and variables have names instead of being just numbers.

Programs written in high-level programming languages are translated into assembly language or machine language by a compiler. Assembly language programs are translated into machine language by a program called an assembler (in effect, a compiler for assembly language).

Every type of CPU has its own machine language. Programs must therefore be rewritten or recompiled to run on different types of computers. Input was based on punched cards and paper tape, and output was displayed on printouts.
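The relationship between assembly language and machine language can be illustrated with a toy assembler. This is only a sketch: the four-instruction machine, its opcode numbers, and the names `OPCODES` and `assemble` are invented for the example and do not correspond to any real CPU.

```python
# Hypothetical instruction set: mnemonic -> numeric opcode.
OPCODES = {"HALT": 0, "LOAD": 1, "ADD": 2, "STORE": 3}


def assemble(source, symbols):
    """Translate assembly text into a list of (opcode, address) number pairs.

    `symbols` maps variable names to memory addresses, which is the part
    that lets programmers write names instead of raw numbers.
    """
    machine_code = []
    for line in source.strip().splitlines():
        line = line.split(";")[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split()
        mnemonic = parts[0].upper()
        if len(parts) == 1:
            address = 0  # instructions without an operand, such as HALT
        elif parts[1] in symbols:
            address = symbols[parts[1]]  # named variable
        else:
            address = int(parts[1])  # literal numeric address
        machine_code.append((OPCODES[mnemonic], address))
    return machine_code


program = """
LOAD price      ; copy the value of price into the accumulator
ADD tax         ; add the value of tax to it
STORE total     ; write the result to total
HALT
"""
print(assemble(program, {"price": 10, "tax": 11, "total": 12}))
```

The output is a list of plain number pairs, which is all the imaginary machine would understand. The named version and the numeric version describe exactly the same instructions.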

The UNIVAC and ENIAC computers are examples of first-generation computing devices. The UNIVAC was the first commercial computer delivered to a business client, the U.S. Census Bureau in 1951.

ENIAC is an acronym for Electronic Numerical Integrator And Computer. Widely regarded as the world's first operational general-purpose electronic digital computer, it was developed by Army Ordnance to compute World War II ballistic firing tables. The ENIAC, weighing 30 tons, using 200 kilowatts of electric power and consisting of 18,000 vacuum tubes, 1,500 relays, and hundreds of thousands of resistors, capacitors, and inductors, was completed in 1945. In addition to ballistics, the ENIAC's field of application included weather prediction, atomic-energy calculations, cosmic-ray studies, thermal ignition, random-number studies, wind-tunnel design, and other scientific uses. The ENIAC soon became obsolete as the need arose for faster computing speeds.

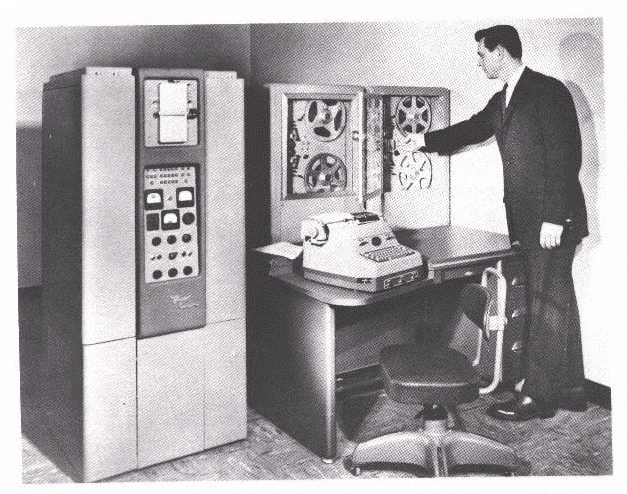

Second Generation - 1956-1963: Transistors

Transistors replaced vacuum tubes and ushered in the second generation of computers. A transistor is a device composed of semiconductor material that amplifies a signal or opens or closes a circuit. Invented in 1947 at Bell Labs, transistors have become the key ingredient of all digital circuits, including computers. A modern microprocessor contains anywhere from millions to billions of microscopic transistors. Prior to the invention of transistors, digital circuits were composed of vacuum tubes, which had many disadvantages. They were much larger, required more energy, dissipated more heat, and were more prone to failures. It's safe to say that without the invention of transistors, computing as we know it today would not be possible.

Although invented in 1947, the transistor did not see widespread use in computers until the late 1950s. The transistor was far superior to the vacuum tube, allowing computers to become smaller, faster, cheaper, more energy-efficient and more reliable than their first-generation predecessors. Though the transistor still generated a great deal of heat that subjected the computer to damage, it was a vast improvement over the vacuum tube. Second-generation computers still relied on punched cards for input and printouts for output.

Second-generation computers moved from cryptic binary machine language to symbolic, or assembly, languages, which allowed programmers to specify instructions in words. High-level programming languages were also being developed at this time, such as early versions of COBOL and FORTRAN. These were also the first computers that stored their instructions in their memory, which moved from a magnetic drum to magnetic core technology.

The first computers of this generation were developed for the atomic energy industry.

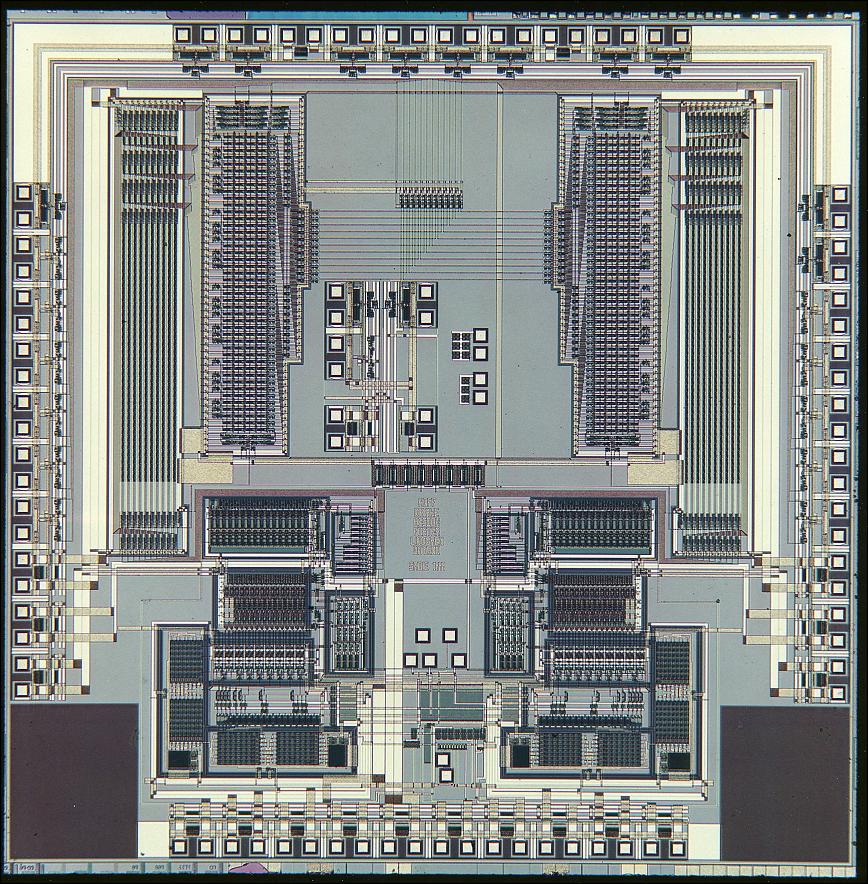

Third Generation - 1964-1971: Integrated Circuits

The development of the integrated circuit was the hallmark of the third generation of computers. Transistors were miniaturized and placed on chips of silicon, a semiconductor, which drastically increased the speed and efficiency of computers.

Silicon is a nonmetallic chemical element in the carbon family of elements. Silicon (atomic symbol "Si") is the second most abundant element in the earth's crust, surpassed only by oxygen. Silicon does not occur uncombined in nature. Sand and almost all rocks contain silicon combined with oxygen, forming silica. When silicon combines with other elements, such as iron, aluminum or potassium, a silicate is formed. Compounds of silicon also occur in the atmosphere, in natural waters, in many plants and in the bodies of some animals.

Silicon is the basic material used to make computer chips, transistors, silicon diodes and other electronic circuits and switching devices because its atomic structure makes the element an ideal semiconductor. Silicon is commonly doped, or mixed, with other elements, such as boron, phosphorus and arsenic, to alter its conductive properties.

A chip is a small piece of semiconducting material (usually silicon) on which an integrated circuit is embedded. A typical chip is less than ¼ square inch in area and can contain millions of electronic components (transistors). Computers consist of many chips placed on electronic boards called printed circuit boards. There are different types of chips. For example, CPU chips (also called microprocessors) contain an entire processing unit, whereas memory chips contain blank memory.

A semiconductor is a material that is neither a good conductor of electricity (like copper) nor a good insulator (like rubber). The most common semiconductor materials are silicon and germanium. These materials are then doped to create an excess or lack of electrons.

Computer chips, both for CPU and memory, are composed of semiconductor materials. Semiconductors make it possible to miniaturize electronic components, such as transistors. Not only does miniaturization mean that the components take up less space, it also means that they are faster and require less energy.

Instead of punched cards and printouts, users interacted with third generation computers through keyboards and monitors and interfaced with an operating system, which allowed the device to run many different applications at one time with a central program that monitored the memory. Computers for the first time became accessible to a mass audience because they were smaller and cheaper than their predecessors.

Fourth Generation - 1971-Present: Microprocessors

The microprocessor brought the fourth generation of computers, as thousands of integrated circuits were built onto a single silicon chip. A microprocessor is a silicon chip that contains a CPU. In the world of personal computers, the terms microprocessor and CPU are used interchangeably. At the heart of all personal computers and most workstations sits a microprocessor. Microprocessors also control the logic of almost all digital devices, from clock radios to fuel-injection systems for automobiles. Three basic characteristics differentiate microprocessors:

- Instruction Set: The set of instructions that the microprocessor can execute.

- Bandwidth: The number of bits processed in a single instruction.

- Clock Speed: Given in megahertz (MHz), the clock speed determines how many instructions per second the processor can execute.

What in the first generation filled an entire room could now fit in the palm of the hand. The Intel 4004 chip, developed in 1971, located all the components of the computer, from the central processing unit and memory to input/output controls, on a single chip.

CPU is an abbreviation of central processing unit, pronounced as separate letters. The CPU is the brains of the computer. Sometimes referred to simply as the processor or central processor, the CPU is where most calculations take place. In terms of computing power, the CPU is the most important element of a computer system.

On large machines, CPUs require one or more printed circuit boards. On personal computers and small workstations, the CPU is housed in a single chip called a microprocessor.

Two typical components of a CPU are:

- The arithmetic logic unit (ALU), which performs arithmetic and logical operations.

- The control unit, which extracts instructions from memory and decodes and executes them, calling on the ALU when necessary.
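The division of labor between the control unit and the ALU can be sketched as a small fetch-decode-execute loop. The machine below is hypothetical: its four opcodes, its single accumulator register, and the names `alu` and `run` are assumptions made for illustration, not a description of any real processor.

```python
# Hypothetical opcodes for a toy machine with a single accumulator register.
HALT, LOAD, ADD, STORE = 0, 1, 2, 3


def alu(operation, a, b):
    """Arithmetic logic unit: performs the arithmetic it is asked to do."""
    if operation == "add":
        return a + b
    raise ValueError(f"unsupported ALU operation: {operation}")


def run(program, memory):
    """Control unit: fetch each instruction, decode it, and execute it."""
    accumulator = 0
    program_counter = 0
    while True:
        opcode, address = program[program_counter]  # fetch
        program_counter += 1
        if opcode == HALT:  # decode, then execute
            break
        elif opcode == LOAD:
            accumulator = memory[address]
        elif opcode == ADD:
            # The control unit calls on the ALU when arithmetic is needed.
            accumulator = alu("add", accumulator, memory[address])
        elif opcode == STORE:
            memory[address] = accumulator
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return memory


memory = {10: 100, 11: 8, 12: 0}
program = [(LOAD, 10), (ADD, 11), (STORE, 12), (HALT, 0)]
print(run(program, memory)[12])
```

Real CPUs are far more elaborate, but the loop has the same shape: the control unit steps through instructions held in memory and hands the arithmetic to the ALU.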

As these small computers became more powerful, they could be linked together to form networks, which eventually led to the development of the Internet. Fourth-generation computers also saw the development of GUIs, the mouse and handheld devices.

Fifth Generation - Present and Beyond: Artificial Intelligence

Fifth-generation computing devices, based on artificial intelligence, are still in development, though some applications, such as voice recognition, are already in use today. Artificial intelligence is the branch of computer science concerned with making computers behave like humans. The term was coined by John McCarthy in the mid-1950s, in connection with the 1956 Dartmouth conference on the subject. Artificial intelligence includes:

- Games Playing: programming computers to play games such as chess and checkers

- Expert Systems: programming computers to make decisions in real-life situations (for example, some expert systems help doctors diagnose diseases based on symptoms)

- Natural Language: programming computers to understand natural human languages

- Neural Networks: Systems that simulate intelligence by attempting to reproduce the types of physical connections that occur in animal brains

- Robotics: programming computers to see and hear and react to other sensory stimuli

In the area of robotics, computers are now widely used in assembly plants, but they are capable only of very limited tasks. Robots have great difficulty identifying objects based on appearance or feel, and they still move and handle objects clumsily.

Natural-language processing offers the greatest potential rewards because it would allow people to interact with computers without needing any specialized knowledge. You could simply walk up to a computer and talk to it. Unfortunately, programming computers to understand natural languages has proved to be more difficult than originally thought. Some rudimentary translation systems that translate from one human language to another are in existence, but they are not nearly as good as human translators.

There are also voice recognition systems that can convert spoken sounds into written words, but they do not understand what they are writing; they simply take dictation. Even these systems are quite limited -- you must speak slowly and distinctly.

In the early 1980s, expert systems were believed to represent the future of artificial intelligence and of computers in general. To date, however, they have not lived up to expectations. Many expert systems help human experts in such fields as medicine and engineering, but they are very expensive to produce and are helpful only in special situations.

Today, the hottest area of artificial intelligence is neural networks, which are proving successful in a number of disciplines such as voice recognition and natural-language processing.

There are several programming languages that are known as AI languages because they are used almost exclusively for AI applications. The two most common are LISP and Prolog.

Voice Recognition

Voice recognition is the field of computer science that deals with designing computer systems that can recognize spoken words. Note that voice recognition implies only that the computer can take dictation, not that it understands what is being said. Comprehending human languages falls under a different field of computer science called natural language processing. A number of voice recognition systems are available on the market. The most powerful can recognize thousands of words. However, they generally require an extended training session during which the computer system becomes accustomed to a particular voice and accent. Such systems are said to be speaker dependent. Many systems also require that the speaker speak slowly and distinctly and separate each word with a short pause. These systems are called discrete speech systems. Recently, great strides have been made in continuous speech systems, which are voice recognition systems that allow you to speak naturally. There are now several continuous-speech systems available for personal computers.

Because of their limitations and high cost, voice recognition systems have traditionally been used only in a few specialized situations. For example, such systems are useful in instances when the user is unable to use a keyboard to enter data because his or her hands are occupied or disabled. Instead of typing commands, the user can simply speak into a headset. Increasingly, however, as the cost decreases and performance improves, speech recognition systems are entering the mainstream and are being used as an alternative to keyboards.

The use of parallel processing and superconductors is helping to make artificial intelligence a reality. Parallel processing is the simultaneous use of more than one CPU to execute a program. Ideally, parallel processing makes a program run faster because there are more engines (CPUs) running it. In practice, it is often difficult to divide a program in such a way that separate CPUs can execute different portions without interfering with each other.

Most computers have just one CPU, but some models have several. There are even computers with thousands of CPUs. With single-CPU computers, it is possible to perform parallel processing by connecting the computers in a network. However, this type of parallel processing requires very sophisticated software called distributed processing software.

Note that parallel processing differs from multitasking, in which a single CPU executes several programs at once.

Parallel processing is also called parallel computing.
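The idea of dividing a program so that separate CPUs can work without interfering with each other can be sketched with Python's standard `concurrent.futures` module. This is a minimal illustration, not a benchmark: the task (summing squares) and the helper names `split_into_chunks`, `sum_of_squares` and `parallel_sum_of_squares` are chosen for the example. Each worker receives its own chunk and shares no data with the others, which is what makes the division easy here.

```python
from concurrent.futures import ProcessPoolExecutor, ThreadPoolExecutor


def split_into_chunks(items, parts):
    """Split a list into `parts` contiguous chunks of nearly equal size."""
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """Work done by one worker; it touches only its own chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(items, workers, executor_cls=ProcessPoolExecutor):
    """Hand one chunk to each worker, then combine the partial results.

    ProcessPoolExecutor runs the workers as separate processes, which the
    operating system can place on separate CPUs. ThreadPoolExecutor can be
    passed instead when starting processes is not convenient.
    """
    chunks = split_into_chunks(list(items), workers)
    with executor_cls(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    return sum(partials)
```

When using the default process pool from a script, the call is normally placed under an `if __name__ == "__main__":` guard, for example `parallel_sum_of_squares(range(1_000_000), 4)`. Programs whose pieces must read and update the same data are much harder to split this cleanly.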

Quantum computation and molecular and nano-technology will radically change the face of computers in years to come. First proposed in the 1970s, quantum computing takes advantage of certain quantum-physical properties of atoms or nuclei that allow them to work together as quantum bits, or qubits, which serve as the computer's processor and memory. By interacting with each other while being isolated from the external environment, qubits can perform certain calculations exponentially faster than conventional computers.

Qubits do not rely on the traditional binary nature of computing. Traditional computers encode information into bits using binary numbers, either a 0 or a 1, and can only do calculations on one set of numbers at once. Quantum computers instead encode information as a series of quantum-mechanical states, such as spin directions of electrons or polarization orientations of a photon. Such a state might represent a 1 or a 0, might represent a combination of the two, might represent a number expressing that the state of the qubit is somewhere between 1 and 0, or might represent a superposition of many different numbers at once. A quantum computer can do an arbitrary reversible classical computation on all the numbers simultaneously, which a binary system cannot do, and also has some ability to produce interference between the different numbers. By doing a computation on many different numbers at once, then interfering the results to get a single answer, a quantum computer has the potential to be much more powerful than a classical computer of the same size. Using only a single processing unit, a quantum computer can naturally perform myriad operations in parallel.
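Superposition and interference can be made a little more concrete with a classical simulation of a single qubit. In this sketch the qubit is represented as a pair of amplitudes for the states 0 and 1, and the operation used is the Hadamard gate, a standard quantum operation that the text above does not name. The function names are chosen for the example.

```python
import math


def hadamard(state):
    """Apply the Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    amp_0, amp_1 = state
    scale = 1 / math.sqrt(2)
    return (scale * (amp_0 + amp_1), scale * (amp_0 - amp_1))


def probabilities(state):
    """Chance of measuring 0 or 1: the squared magnitude of each amplitude."""
    amp_0, amp_1 = state
    return (abs(amp_0) ** 2, abs(amp_1) ** 2)


zero = (1.0, 0.0)              # a qubit that is definitely 0
superposed = hadamard(zero)    # now an equal mix of 0 and 1
restored = hadamard(superposed)  # the two paths interfere

print(probabilities(superposed))
print(probabilities(restored))
```

After one Hadamard gate the qubit is equally likely to be measured as 0 or 1. After a second gate, the amplitudes for 1 cancel and the qubit is back to 0 (up to floating-point rounding), which is a small instance of the interference described above. A classical simulation like this needs twice as many amplitudes for every qubit added, which hints at why real quantum hardware is of interest.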

Quantum computing is not well suited for tasks such as word processing and email, but it is ideal for tasks such as cryptography and modeling and indexing very large databases.

Nanotechnology is a field of science whose goal is to control individual atoms and molecules to create computer chips and other devices that are thousands of times smaller than current technologies permit. Current manufacturing processes use lithography to imprint circuits on semiconductor materials. While lithography has improved dramatically over the last two decades, to the point where some manufacturing plants can produce circuits smaller than one micron (1,000 nanometers), it still deals with aggregates of millions of atoms. It is widely believed that lithography is quickly approaching its physical limits. To continue reducing the size of semiconductors, new technologies that juggle individual atoms will be necessary. This is the realm of nanotechnology.

Although research in this field dates back to Richard P. Feynman's classic talk in 1959, the term nanotechnology was popularized by K. Eric Drexler in 1986 in the book Engines of Creation.

In the popular press, the term nanotechnology is sometimes used to refer to any sub-micron process, including lithography. Because of this, many scientists are beginning to use the term molecular nanotechnology when talking about true nanotechnology at the molecular level.

The goal of fifth-generation computing is to develop devices that respond to natural language input and are capable of learning and self-organization.

Here natural language means a human language. For example, English, French, and Chinese are natural languages. Computer languages, such as FORTRAN and C, are not.

Probably the single most challenging problem in computer science is to develop computers that can understand natural languages. So far, the complete solution to this problem has proved elusive, although a great deal of progress has been made. Fourth-generation languages are the programming languages closest to natural languages.

(Source : http://www.techiwarehouse.com/cms/engine.php?page_id=a046ee08 )
